AI-Augmented Design Intent Sketch Tool

"The model is not the author. Your hand is the origin of every mark."

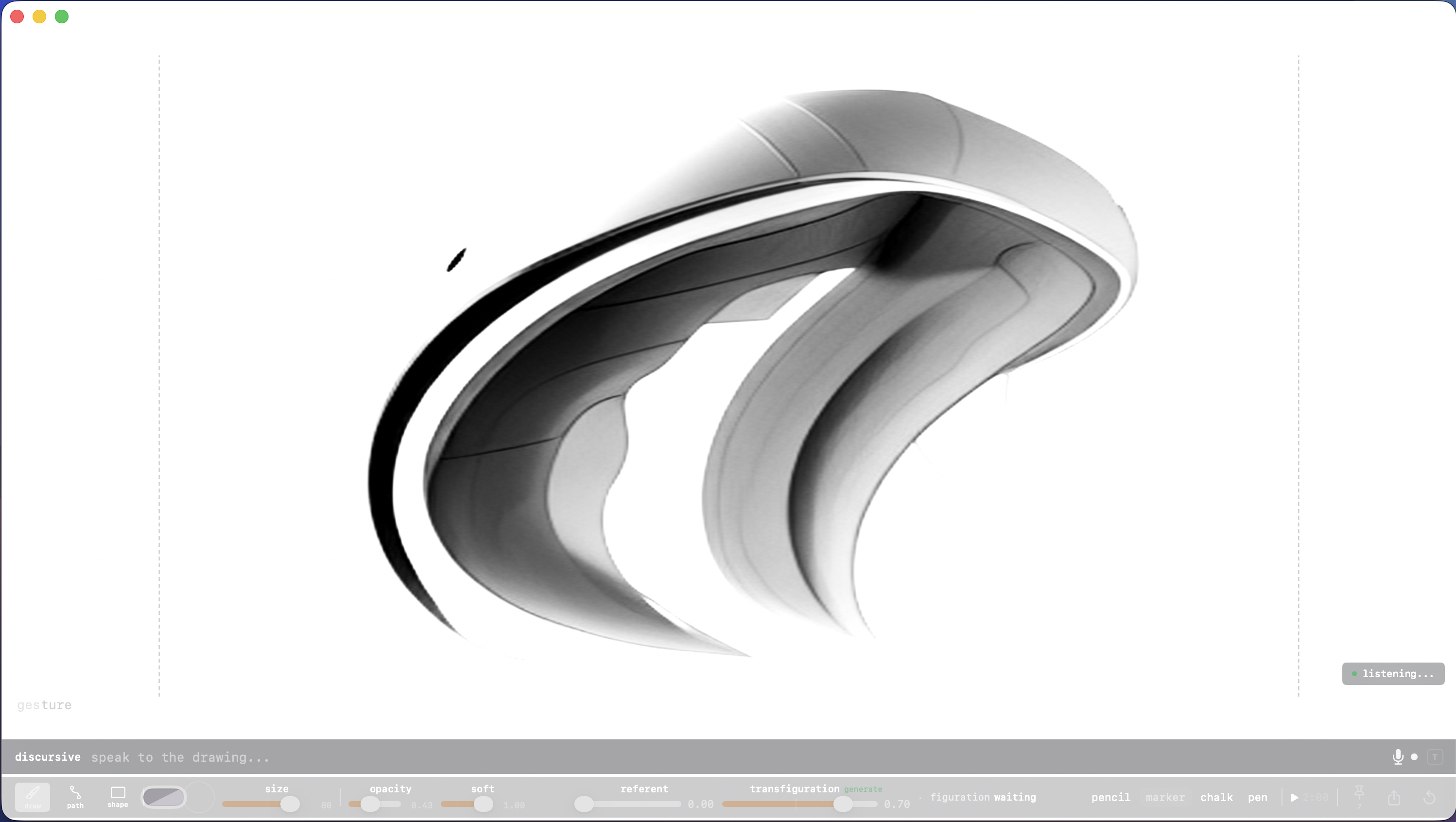

Draw-with-image, not prompt-to-image. Gesture is a blank rectangle that waits for your first mark to dirty it up. The model refines that mark. Move a slider and the mark gets darker or disappears. Make another mark and the model refines or extends it. Activate text or voice input and your verbal prompts subtly adjust the drawing, if the transfiguration slider is high enough. Make it quicker, make it hotter, it's too damned sharp and it's about to cut me. Help!

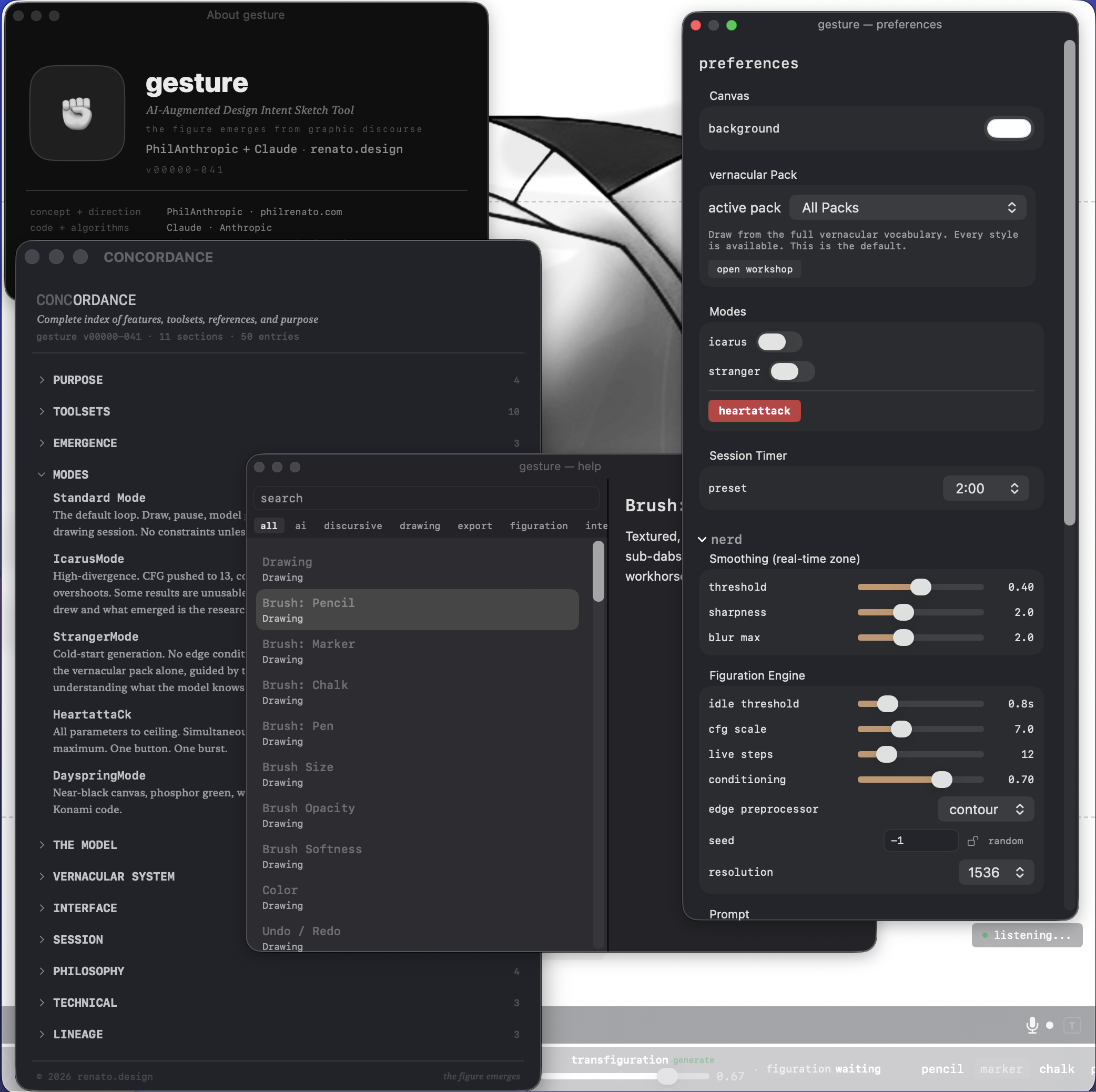

My largest app by line count, which means little to me beyond the tokens I burned to make it. macOS native, with pressure-sensitive tablet support. It has only a few brushes; it's not meant to compete with the big kids (yet). But it can draw and edit vector splines, with Bezier handles for precise editing if you want them. It uses Core ML Stable Diffusion and was trained with about eleven vernacular packs (you can extend it by feeding it your own drawings). You can capture images and export them all at once. And like all my apps, Gesture has a rich Concordance panel in addition to full help and tooltips. I am constantly reminding Claude to update that stuff. It probably thinks I care more about the documentation than the content, and it may be right.

Every stroke is a commitment and every inference is a provocation.

You draw. Your voice adds design vocabulary while your hands stay on the surface. The transfiguration slider controls how much the model transforms your marks — from a whisper of refinement to aggressive reimagining, capped at 0.85 because your hand should always be visible in the result. Pin what you like. Export the batch. Repeat until the drawing is smarter than you expected.
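The cap is easy to picture in code. This is only a sketch, not Gesture's actual source: it assumes the slider runs from 0 to 1 and that its value is handed to the model as a transformation strength, and the names `MAX_TRANSFIGURATION` and `effective_strength` are made up for illustration.

```python
# Hypothetical names; only the 0.85 ceiling comes from the description above.
MAX_TRANSFIGURATION = 0.85


def effective_strength(slider: float) -> float:
    """Clamp a slider position to [0, 1], then hold it under the cap."""
    slider = min(max(slider, 0.0), 1.0)
    return min(slider, MAX_TRANSFIGURATION)
```

Dragging the slider all the way right would still yield 0.85 under this sketch, so some of the original mark always survives.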

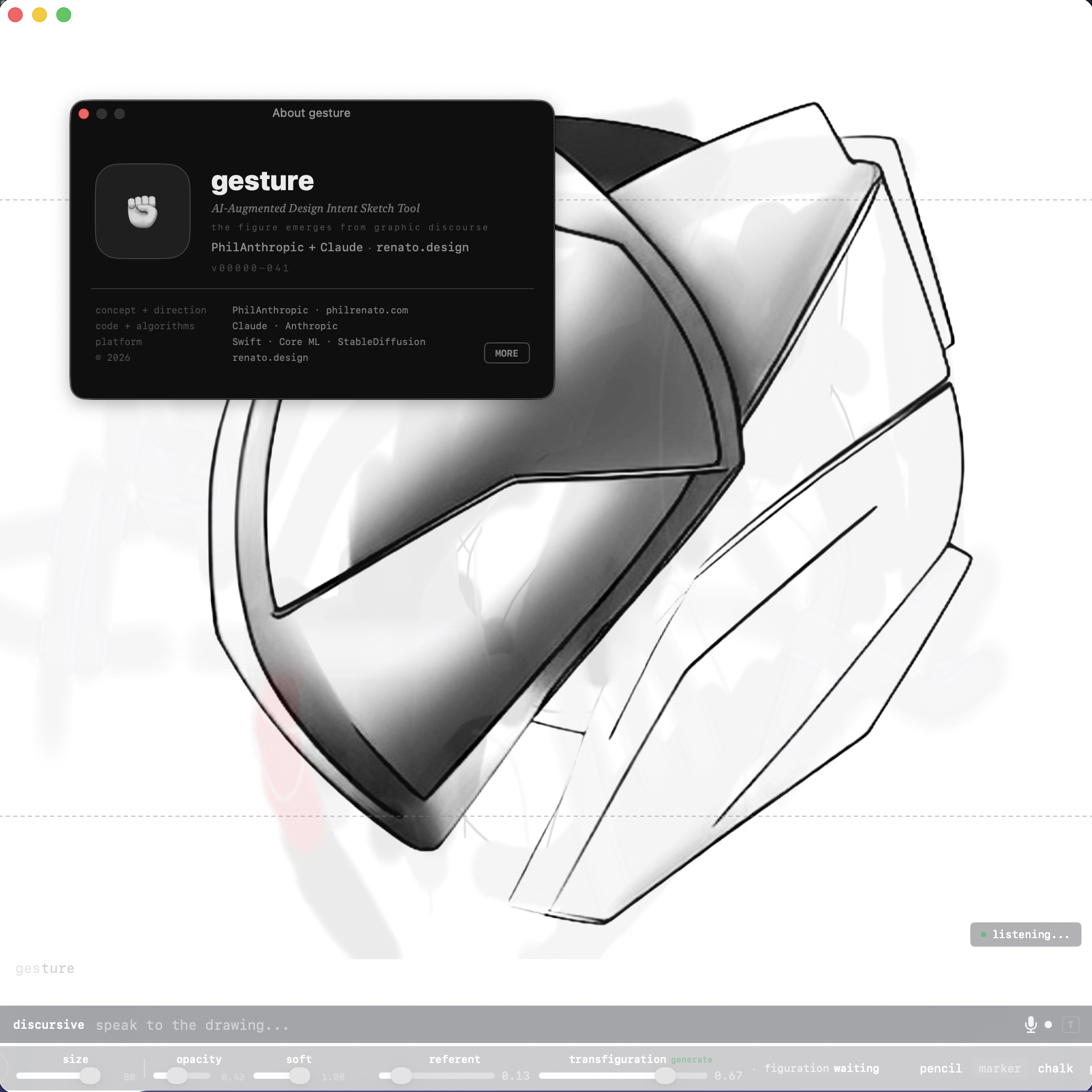

About panel. PhilAnthropic + Claude. renato.design.

Concordance, Help, Preferences. Every feature documented. Every parameter exposed.

Three input lanes. A figuration prompt overlay for direct prompt editing on canvas. A DisCursive bar with 350+ design vocabulary terms across twelve domains, including elements, principles, edge character, composition, rendering, vernacular, and affect. Voice input with on-device speech recognition, continuous cycling, and a 1.5-second silence auto-commit. Your hands never leave the surface.
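The silence auto-commit can be sketched as a small buffer that watches the clock. This is an illustration under assumptions, not the app's code: `VoiceBuffer`, `hear`, and `tick` are hypothetical names, timestamps are passed in by the caller, and the on-device speech recognizer is left out entirely.

```python
SILENCE_COMMIT_SECONDS = 1.5  # threshold taken from the description above


class VoiceBuffer:
    """Collect recognized words and commit them after a pause (hypothetical)."""

    def __init__(self):
        self.words = []        # words heard since the last commit
        self.last_heard = None # timestamp of the most recent word
        self.committed = []    # finished phrases, oldest first

    def hear(self, word, now):
        # A long enough gap before this word closes the previous phrase.
        self.tick(now)
        self.words.append(word)
        self.last_heard = now

    def tick(self, now):
        # Call periodically; commits once the silence threshold has passed.
        if self.words and now - self.last_heard >= SILENCE_COMMIT_SECONDS:
            self.committed.append(" ".join(self.words))
            self.words = []
```

After a commit the buffer is empty and keeps listening, which is one plausible reading of "continuous cycling".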

The vocabulary engine. Not a chatbot. A design vocabulary. Say "aggressive contour, cross section, knife edge" and it knows exactly what that means without asking a cloud. All 350+ terms resolve instantly. Unknown phrases route through a local LLM for rewriting. Everything runs on your machine.
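The lookup-then-fallback flow might look roughly like this. Everything here is assumed for illustration: the two sample entries and their prompt fragments are invented, `resolve` is a hypothetical name, and the `rewrite` callable merely stands in for the local LLM.

```python
from typing import Callable, List

# Invented sample entries; the real table holds 350+ terms.
VOCABULARY = {
    "aggressive contour": "bold, forceful outer silhouette",
    "knife edge": "thin, sharply creased edge",
}


def resolve(phrase: str, rewrite: Callable[[str], str]) -> List[str]:
    """Resolve comma-separated terms; unknown ones go to the fallback."""
    fragments = []
    for term in phrase.split(","):
        key = " ".join(term.lower().split())  # normalize case and spacing
        if not key:
            continue
        if key in VOCABULARY:
            fragments.append(VOCABULARY[key])  # instant dictionary hit
        else:
            fragments.append(rewrite(key))     # slower path: local rewrite
    return fragments
```

Known terms never touch the fallback, which is why a dictionary hit can feel instant while an unfamiliar phrase takes a beat longer.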

Brushes and splines. Pencil, marker, chalk, pen, blueline annotation (visible to you, invisible to the model), eraser. Pressure-sensitive. Catmull-Rom interpolation. Fifty levels of undo. Spline editing with anchor points and Bezier handles for when the gesture needs precision.
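For the curious, here is what Catmull-Rom interpolation does to a handful of tablet samples. This is a sketch of the standard uniform form of the spline, not necessarily the variant Gesture uses; the function names and the choice to duplicate the end points are assumptions.

```python
def catmull_rom_point(p0, p1, p2, p3, t):
    """Point at t in [0, 1] on the uniform Catmull-Rom segment from p1 to p2."""
    t2, t3 = t * t, t * t * t
    return tuple(
        0.5 * (2 * b + (c - a) * t
               + (2 * a - 5 * b + 4 * c - d) * t2
               + (-a + 3 * b - 3 * c + d) * t3)
        for a, b, c, d in zip(p0, p1, p2, p3)
    )


def smooth_stroke(points, samples_per_segment=8):
    """Return a denser polyline that passes through every input point."""
    if len(points) < 2:
        return list(points)
    # Duplicate the end points so the first and last segments have neighbors.
    padded = [points[0], *points, points[-1]]
    out = []
    for i in range(len(points) - 1):
        p0, p1, p2, p3 = padded[i:i + 4]
        for s in range(samples_per_segment):
            out.append(catmull_rom_point(p0, p1, p2, p3, s / samples_per_segment))
    out.append(points[-1])
    return out
```

Because the curve passes through each sample rather than merely near it, the smoothed stroke stays faithful to where the pen actually went. Points are plain tuples, so a third component such as pressure would be interpolated the same way.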

Modes for when you mean it. IcarusMode pushes divergence to the ceiling. StrangerMode starts cold with no conditioning. HeartattaCk sets every parameter to maximum. DayspringMode is a Konami Code easter egg. You'll know when you find it.