murmur™

a resonance instrument

lowercase ambient

"A tuning fork, gesticulation, synthesizer, a television playing a scene that no one scripted or even acted out."

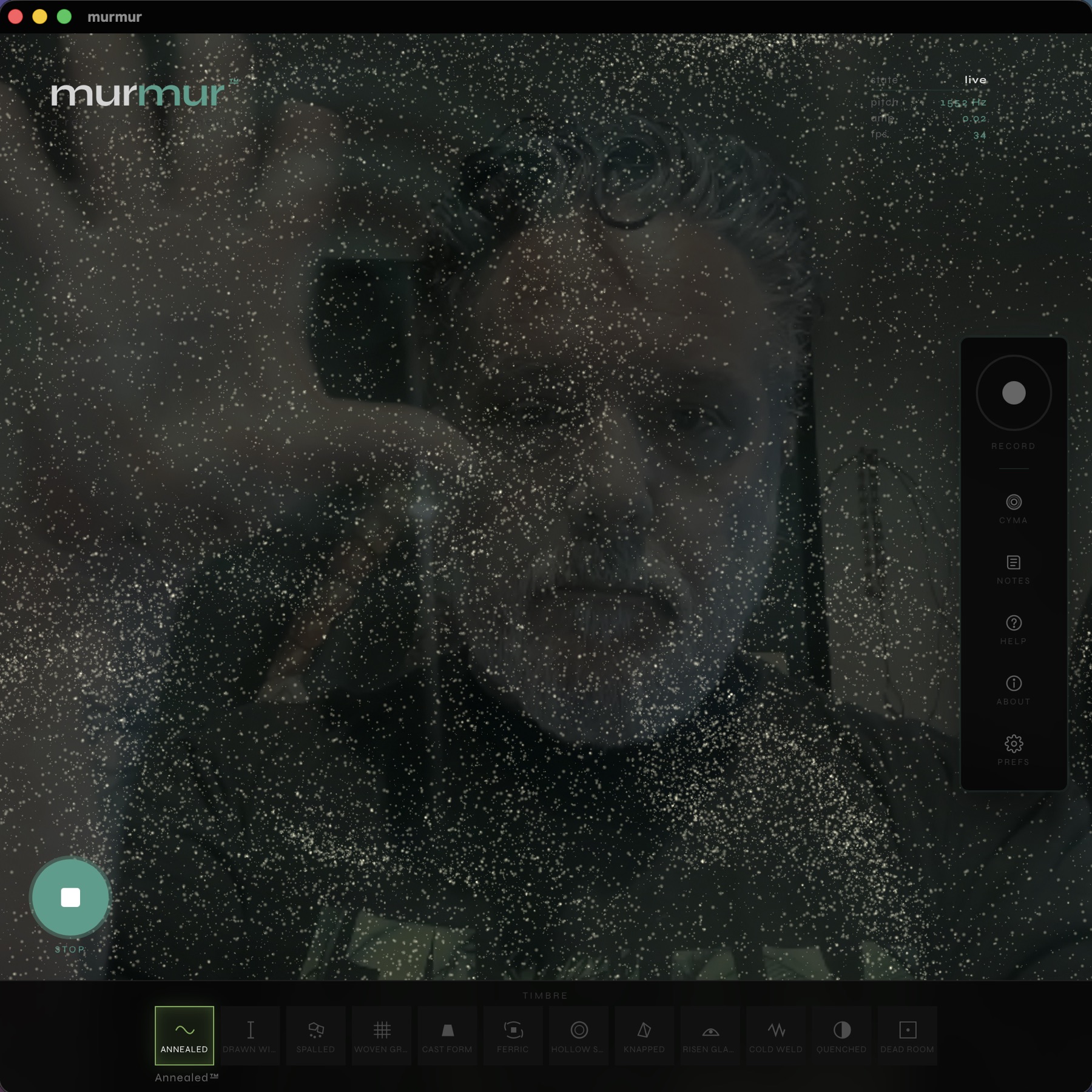

A software Chladni plate. Sing into your laptop and wave at it, and a four-layer physics surface tells you, in real time, what your sound actually is. Not what it should be. What it is.

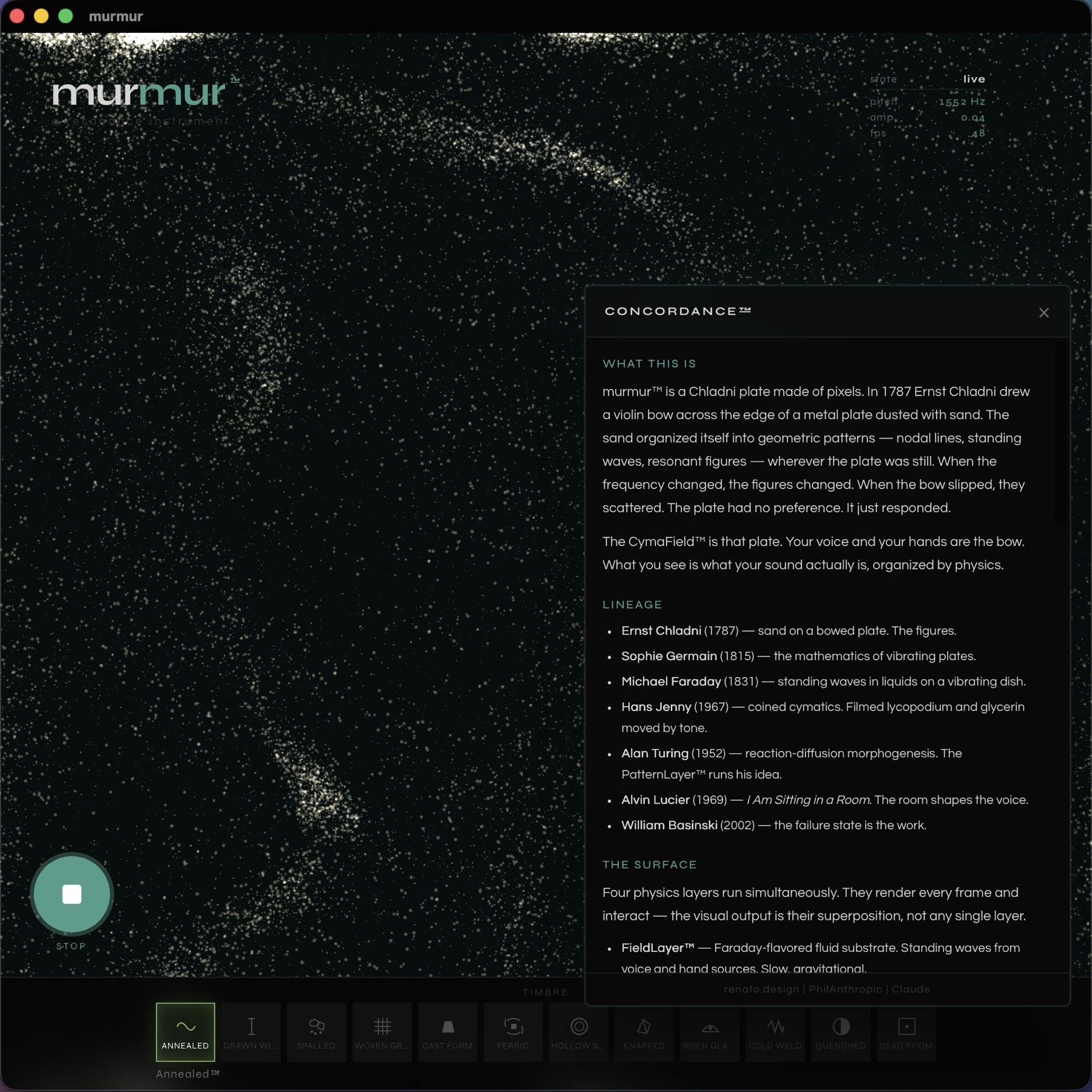

In 1787 Ernst Chladni dragged a violin bow across a metal plate dusted with sand and the sand jumped into geometry. The plate had no opinion about music. It just responded. murmur is that plate, with a couple of centuries of physics catching up.

It is not a music generator. It is not a visualizer. It is not a game. It is a quasi-scientific instrument that happens, as a side effect of being played, to produce something you could call a composition and something you could call an image. You make both by doing something with your mouth and your hands. The app holds still and watches.

Physics is the artist. I just let it draw.

Two channels. They cooperate, mostly.

VoiceChannel listens to your microphone — pitch, amplitude, spectral centroid, zero-crossing rate. It does not change your voice. It does not auto-tune you. It writes down what your voice was and hands the notes to the synthesizer. You are not the output. The output is what the instrument made from you.
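The four features VoiceChannel listens for are standard frame-level descriptors, and each takes only a few lines of arithmetic. What follows is a minimal sketch, not murmur's code. It assumes a mono frame of samples in a `Float32Array`, uses a naive Hann-windowed DFT where a browser build would more likely read an FFT from a Web Audio `AnalyserNode`, and estimates pitch with plain autocorrelation above an assumed 60 Hz floor. The names `analyzeFrame` and `VoiceFeatures` are hypothetical.

```typescript
// Hypothetical VoiceChannel-style analyzer; an illustration, not murmur's source.
interface VoiceFeatures {
  pitchHz: number; // 0 when no periodic peak is found
  amplitude: number; // RMS of the frame
  centroidHz: number; // magnitude-weighted mean frequency
  zeroCrossingRate: number; // sign changes per sample
}

function analyzeFrame(frame: Float32Array, sampleRate: number): VoiceFeatures {
  const n = frame.length;

  // Amplitude: root-mean-square of the raw samples.
  let sumSq = 0;
  for (let i = 0; i < n; i++) sumSq += frame[i] * frame[i];
  const amplitude = Math.sqrt(sumSq / n);

  // Zero-crossing rate: sign changes between neighbouring samples.
  let crossings = 0;
  for (let i = 1; i < n; i++) {
    if ((frame[i - 1] >= 0) !== (frame[i] >= 0)) crossings++;
  }
  const zeroCrossingRate = crossings / (n - 1);

  // Spectral centroid: periodic Hann window, then a naive O(n^2) DFT.
  const windowed = new Float64Array(n);
  for (let t = 0; t < n; t++) {
    windowed[t] = frame[t] * 0.5 * (1 - Math.cos((2 * Math.PI * t) / n));
  }
  let weighted = 0;
  let total = 0;
  for (let k = 1; k < n / 2; k++) {
    let re = 0;
    let im = 0;
    for (let t = 0; t < n; t++) {
      const phase = (2 * Math.PI * k * t) / n;
      re += windowed[t] * Math.cos(phase);
      im -= windowed[t] * Math.sin(phase);
    }
    const magnitude = Math.hypot(re, im);
    weighted += ((k * sampleRate) / n) * magnitude;
    total += magnitude;
  }
  const centroidHz = total > 0 ? weighted / total : 0;

  // Pitch: autocorrelation. Skip the lobe around lag 0 (until the correlation
  // first stops being positive), then take the strongest peak up to the lag
  // for an assumed 60 Hz floor.
  const autocorr = (lag: number): number => {
    let sum = 0;
    for (let i = 0; i + lag < n; i++) sum += frame[i] * frame[i + lag];
    return sum;
  };
  const maxLag = Math.min(n - 1, Math.floor(sampleRate / 60));
  let lag = 1;
  while (lag <= maxLag && autocorr(lag) > 0) lag++;
  let bestLag = 0;
  let bestCorr = 0;
  for (; lag <= maxLag; lag++) {
    const c = autocorr(lag);
    if (c > bestCorr) {
      bestCorr = c;
      bestLag = lag;
    }
  }
  const pitchHz = bestLag > 0 ? sampleRate / bestLag : 0;

  return { pitchHz, amplitude, centroidHz, zeroCrossingRate };
}
```

Autocorrelation is about the simplest pitch estimator that works on a sung vowel. It can jump an octave on breathy or noisy input, which suits an instrument that reports what the sound was rather than what it should have been.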

GestureChannel watches your hands through your webcam. The camera feed is not displayed (unless you turn it on for documentation purposes) — your hands are data, not video. Position is timbre. Depth is space. Velocity is a wave. Pinching is a request, politely phrased, for the field to crystallize.
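MediaPipe Hands reports 21 landmarks per hand in normalized image coordinates. The wrist is index 0, the thumb tip 4, the index fingertip 8, and the middle-finger knuckle (MCP) 9. Here is a minimal sketch of how those could become the gestures above, assuming the tracker has already delivered one hand's landmarks. Pinch is measured as thumb-to-index distance relative to hand size. Depth is approximated by apparent hand size because each landmark's `z` is relative to the wrist, not an absolute distance from the camera. `readGesture`, `GestureState`, and the 0.35 pinch threshold are hypothetical.

```typescript
// Hypothetical GestureChannel mapping from MediaPipe Hands landmarks.
interface Landmark {
  x: number; // normalized 0..1 across the image
  y: number; // normalized 0..1 down the image
  z: number; // depth relative to the wrist (unused here)
}

// MediaPipe Hands landmark indices.
const WRIST = 0;
const THUMB_TIP = 4;
const INDEX_TIP = 8;
const MIDDLE_MCP = 9;

const PINCH_RATIO = 0.35; // assumed: pinch when tips are closer than 35% of hand size

interface GestureState {
  x: number; // palm position (could drive timbre)
  y: number;
  depth: number; // apparent hand size; larger means closer (could drive space)
  speed: number; // palm speed in normalized image units per second (could launch waves)
  pinching: boolean; // the request to crystallize
}

function dist(a: Landmark, b: Landmark): number {
  return Math.hypot(a.x - b.x, a.y - b.y);
}

function readGesture(hand: Landmark[], prev: GestureState | null, dtSeconds: number): GestureState {
  const size = dist(hand[WRIST], hand[MIDDLE_MCP]);
  const x = (hand[WRIST].x + hand[MIDDLE_MCP].x) / 2;
  const y = (hand[WRIST].y + hand[MIDDLE_MCP].y) / 2;
  const speed = prev !== null && dtSeconds > 0 ? Math.hypot(x - prev.x, y - prev.y) / dtSeconds : 0;
  const pinching = size > 0 && dist(hand[THUMB_TIP], hand[INDEX_TIP]) < PINCH_RATIO * size;
  return { x, y, depth: size, speed, pinching };
}
```

Normalizing the pinch distance by hand size keeps the gesture working whether the hand is close to the lens or far from it.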

MurmurTone is the synthesizer. Not a guitar, not a piano, not an instrument simulator. Closer to a metal plate being excited by a transducer. Or a wine glass held at its resonant frequency. Or a theremin processed through a piece of stone. The synthesis sounds like something that has a body, not like something that is playing a body.
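One common way to make a synthesizer sound like it has a body is modal synthesis: a bank of exponentially decaying sinusoids, one per resonant mode of the object, all excited together. The source does not say MurmurTone works this way. This is a swapped-in technique, shown only for illustration. The ratios, gains, and decay times in `PLATE_MODES` are made-up inharmonic values, not measurements of a real plate and not one of murmur's presets. `strike` is a hypothetical name.

```typescript
// Illustrative modal bank: exponentially decaying sinusoids at inharmonic ratios.
interface Mode {
  ratio: number; // mode frequency / fundamental
  gain: number; // relative level at the moment of excitation
  decaySeconds: number; // time constant of the exponential decay
}

// Made-up inharmonic values for illustration; not a measured plate or a murmur preset.
const PLATE_MODES: Mode[] = [
  { ratio: 1.0, gain: 1.0, decaySeconds: 1.2 },
  { ratio: 1.59, gain: 0.6, decaySeconds: 0.9 },
  { ratio: 2.14, gain: 0.4, decaySeconds: 0.6 },
  { ratio: 2.65, gain: 0.3, decaySeconds: 0.4 },
  { ratio: 3.16, gain: 0.2, decaySeconds: 0.25 },
];

// Render one excitation of the modal bank as mono samples in [-1, 1].
function strike(fundamentalHz: number, modes: Mode[], sampleRate: number, seconds: number): Float32Array {
  const out = new Float32Array(Math.round(sampleRate * seconds));
  let gainSum = 0;
  for (const mode of modes) gainSum += mode.gain;
  if (gainSum === 0) return out;
  for (const mode of modes) {
    const f = fundamentalHz * mode.ratio;
    if (f >= sampleRate / 2) continue; // skip modes above Nyquist (they would alias)
    const level = mode.gain / gainSum; // normalized so the sum can't exceed 1
    for (let i = 0; i < out.length; i++) {
      const t = i / sampleRate;
      out[i] += level * Math.exp(-t / mode.decaySeconds) * Math.sin(2 * Math.PI * f * t);
    }
  }
  return out;
}
```

The inharmonic ratios are what separate a plate or a glass from a string. A string's modes sit near integer multiples of the fundamental, so replacing the ratios with 1, 2, 3, 4, 5 pulls the same code toward a more string-like tone.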

Twelve material presets, named for material states, not instruments — Annealed, Drawn Wire, Spalled, Woven Ground, Cast Form, Ferric, Hollow Shell, Knapped, Risen Glaze, Cold Weld, Quenched, Dead Room. Hold two at once and the space between them, located by your hand spread, is a valid destination.
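Holding two presets while hand spread picks a point between them amounts to interpolating two parameter sets. Here is a minimal sketch, assuming each material is a flat record of numbers and that hand spread arrives as a normalized distance (for instance, thumb tip to little fingertip). The fields `brightness`, `damping`, and `inharmonicity`, their values, and the `closed`/`open` calibration range are all hypothetical. Only the preset names Annealed and Quenched come from the list above.

```typescript
// Hypothetical material parameters; murmur's actual preset fields are not documented.
interface Material {
  brightness: number; // 0..1
  damping: number; // 0..1
  inharmonicity: number; // 0..1
}

// Preset names from the list above; the numbers are invented for illustration.
const ANNEALED: Material = { brightness: 0.3, damping: 0.2, inharmonicity: 0.1 };
const QUENCHED: Material = { brightness: 0.9, damping: 0.6, inharmonicity: 0.5 };

// Map a normalized hand spread to a blend position in [0, 1].
// `closed` and `open` are assumed calibration points.
function spreadToBlend(spread: number, closed = 0.1, open = 0.5): number {
  const t = (spread - closed) / (open - closed);
  return Math.min(1, Math.max(0, t));
}

// Linear interpolation of every parameter: t = 0 gives `a`, t = 1 gives `b`.
function blendMaterials(a: Material, b: Material, t: number): Material {
  const lerp = (x: number, y: number): number => x + (y - x) * t;
  return {
    brightness: lerp(a.brightness, b.brightness),
    damping: lerp(a.damping, b.damping),
    inharmonicity: lerp(a.inharmonicity, b.inharmonicity),
  };
}

// Usage: a half-open hand lands halfway between the two held presets.
const between = blendMaterials(ANNEALED, QUENCHED, spreadToBlend(0.3));
```

Linear interpolation keeps every point between two valid presets valid, provided each parameter tolerates intermediate values. Parameters heard logarithmically, such as decay time, might blend more evenly in log space.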

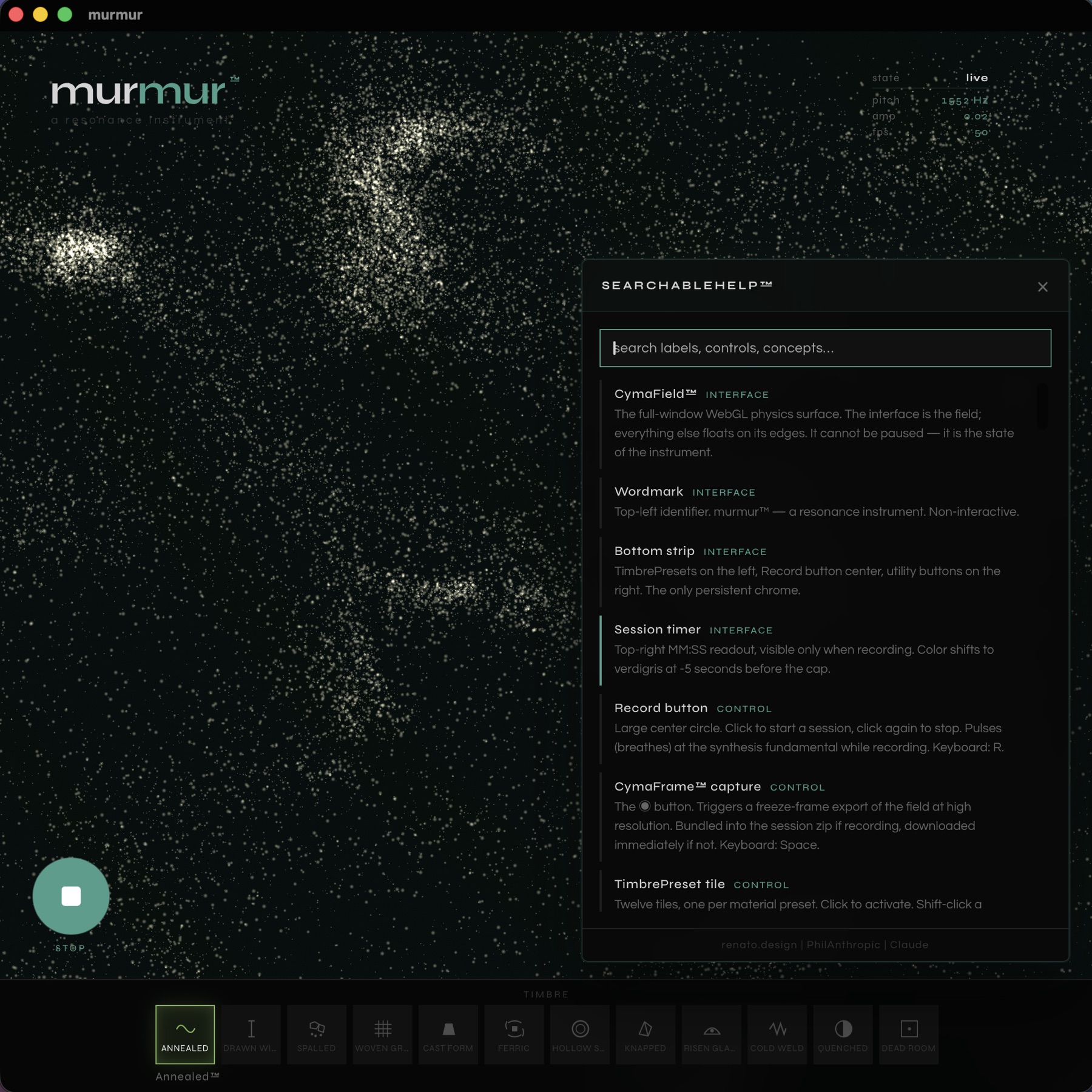

The CymaField is the interface. Everything else is a tool placed at its edges, and the edges know their place.

Four physics layers, all running, all the time, all interacting. What you see is the superposition.

FieldLayer is a Faraday-flavored fluid film, slow and gravitational.

PatternLayer is a Gray-Scott reaction-diffusion system that crystallizes into Chladni figures on stable pitch and dissolves into turbulence on noise.

GrainLayer is sand: thousands of grains carrying their own phase and rate, drifting toward nodal lines when the surface stabilizes and scattering when something hits hard.

MembraneLayer is the displacement layer. Hand depth pushes local dimples into the surface; hand velocity sends traveling waves across it.
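PatternLayer's Gray-Scott system can be sketched as one explicit Euler update on a periodic grid with a five-point Laplacian. murmur presumably runs this in a WebGL shader. This CPU version in TypeScript only shows the per-pixel arithmetic such a shader would perform. The diffusion rates 0.16 and 0.08 and the feed/kill values 0.035 and 0.065 are illustrative choices. How murmur couples pitch stability to the pattern (for example, by modulating feed and kill) is not documented, so that link is an assumption. `createField` and `stepGrayScott` are hypothetical names.

```typescript
// Gray-Scott reaction-diffusion, one explicit Euler step on a periodic grid:
//   du/dt = Du * lap(u) - u*v^2 + F * (1 - u)
//   dv/dt = Dv * lap(v) + u*v^2 - (F + k) * v
interface GrayScott {
  w: number;
  h: number;
  u: Float32Array; // substrate concentration
  v: Float32Array; // autocatalyst concentration
}

function createField(w: number, h: number): GrayScott {
  const u = new Float32Array(w * h).fill(1);
  const v = new Float32Array(w * h);
  // Seed a small square of v in the centre so a pattern has somewhere to start.
  const cx = Math.floor(w / 2);
  const cy = Math.floor(h / 2);
  for (let y = cy - 3; y < cy + 3; y++) {
    for (let x = cx - 3; x < cx + 3; x++) {
      u[y * w + x] = 0.5;
      v[y * w + x] = 0.25;
    }
  }
  return { w, h, u, v };
}

function stepGrayScott(s: GrayScott, feed: number, kill: number, du = 0.16, dv = 0.08, dt = 1): void {
  const { w, h, u, v } = s;
  const nextU = new Float32Array(u.length);
  const nextV = new Float32Array(v.length);
  for (let y = 0; y < h; y++) {
    for (let x = 0; x < w; x++) {
      const i = y * w + x;
      // Five-point Laplacian with wrap-around neighbours.
      const left = y * w + ((x - 1 + w) % w);
      const right = y * w + ((x + 1) % w);
      const up = ((y - 1 + h) % h) * w + x;
      const down = ((y + 1) % h) * w + x;
      const lapU = u[left] + u[right] + u[up] + u[down] - 4 * u[i];
      const lapV = v[left] + v[right] + v[up] + v[down] - 4 * v[i];
      const reaction = u[i] * v[i] * v[i];
      nextU[i] = u[i] + dt * (du * lapU - reaction + feed * (1 - u[i]));
      nextV[i] = v[i] + dt * (dv * lapV + reaction - (feed + kill) * v[i]);
    }
  }
  s.u = nextU;
  s.v = nextV;
}
```

With a five-point Laplacian and unit grid spacing, the explicit diffusion step stays stable only while D·dt ≤ 0.25. That is why a rate like 0.16 can be paired with dt = 1.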

When the sound is coherent, the surface organizes. When it isn't, it doesn't. Both states are true. Neither is a failure.
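The GrainLayer behaviour, with sand settling onto nodal lines when the sound is coherent and scattering when it isn't, can be sketched as gradient descent on the squared plate displacement u² plus random jitter. This is an illustration under stated assumptions. It uses the simplified formula u(x, y) = cos(nπx)cos(mπy) − cos(mπx)cos(nπy) for mode (n, m) of a square plate, a formula often used in Chladni-pattern demos. It also assumes a hypothetical `coherence` value in [0, 1] that trades drift for scatter. `plate`, `stepGrains`, and the step sizes are not murmur's.

```typescript
// Illustrative GrainLayer-style update; not murmur's implementation.
interface Grain {
  x: number; // 0..1 across the plate
  y: number; // 0..1 down the plate
}

// Simplified square-plate mode (n, m) often used in Chladni-pattern demos.
function plate(x: number, y: number, n: number, m: number): number {
  const p = Math.PI;
  return Math.cos(n * p * x) * Math.cos(m * p * y) - Math.cos(m * p * x) * Math.cos(n * p * y);
}

// coherence = 1: pure drift toward u = 0; coherence = 0: pure random scatter.
function stepGrains(
  grains: Grain[],
  n: number,
  m: number,
  coherence: number,
  rate = 0.002,
  rand: () => number = Math.random,
): void {
  const eps = 1e-4;
  for (const g of grains) {
    const u = plate(g.x, g.y, n, m);
    // Central-difference gradient of u.
    const ux = (plate(g.x + eps, g.y, n, m) - plate(g.x - eps, g.y, n, m)) / (2 * eps);
    const uy = (plate(g.x, g.y + eps, n, m) - plate(g.x, g.y - eps, n, m)) / (2 * eps);
    // The gradient of u² is 2u∇u, so descending it slides the grain toward a nodal line.
    const drift = coherence * rate;
    const jitter = (1 - coherence) * 0.02; // assumed scatter scale
    g.x -= drift * 2 * u * ux + jitter * (rand() - 0.5);
    g.y -= drift * 2 * u * uy + jitter * (rand() - 0.5);
    // Keep grains on the plate.
    g.x = Math.min(1, Math.max(0, g.x));
    g.y = Math.min(1, Math.max(0, g.y));
  }
}
```

With coherence at 1, each grain slides toward the nearest low point of u², which for a clean mode is usually a nodal line. Lowering coherence lets the jitter term shake the grains loose again.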

Every session produces a small bundle of artifacts:

A LoopFile — the synthesized audio of your performance, rendered as 16-bit PCM WAV. Not your voice, but what the instrument made from your voice.

Optional video — the field captured at sixty frames per second, H.264, alongside the audio. A real-time documentary of your performance.

Any CymaFrames you captured along the way — high-resolution PNGs of the surface at the moments you froze it. A photograph of sound that has been organized into form.

A SessionSlate — the parameter stream of the entire session at thirty samples a second. Not a score, but enough to argue with later.
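A LoopFile in 16-bit PCM WAV consists of a 44-byte RIFF header followed by little-endian signed 16-bit samples. Here is a minimal encoder sketch, assuming mono audio at a caller-supplied sample rate (the source specifies neither the channel count nor the rate). `encodeWav16` is a hypothetical name.

```typescript
// Encode mono float samples (-1..1) as a 16-bit PCM WAV file.
function encodeWav16(samples: Float32Array, sampleRate: number): Uint8Array {
  const channels = 1;
  const bytesPerSample = 2;
  const dataSize = samples.length * bytesPerSample * channels;
  const buffer = new ArrayBuffer(44 + dataSize);
  const view = new DataView(buffer);
  const writeAscii = (offset: number, text: string): void => {
    for (let i = 0; i < text.length; i++) view.setUint8(offset + i, text.charCodeAt(i));
  };

  writeAscii(0, "RIFF");
  view.setUint32(4, 36 + dataSize, true); // file size minus the first 8 bytes
  writeAscii(8, "WAVE");
  writeAscii(12, "fmt ");
  view.setUint32(16, 16, true); // fmt chunk size for PCM
  view.setUint16(20, 1, true); // audio format 1 = integer PCM
  view.setUint16(22, channels, true);
  view.setUint32(24, sampleRate, true);
  view.setUint32(28, sampleRate * channels * bytesPerSample, true); // byte rate
  view.setUint16(32, channels * bytesPerSample, true); // block align
  view.setUint16(34, 16, true); // bits per sample
  writeAscii(36, "data");
  view.setUint32(40, dataSize, true);

  for (let i = 0; i < samples.length; i++) {
    const s = Math.max(-1, Math.min(1, samples[i])); // clip to the legal range
    view.setInt16(44 + i * 2, s < 0 ? s * 0x8000 : s * 0x7fff, true);
  }
  return new Uint8Array(buffer);
}
```

Negative samples are scaled by 32768 and positive ones by 32767, so both -1.0 and +1.0 land exactly on the ends of the Int16 range without overflowing.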

All four bundle into a single zip. Filenames don't use timestamps; they use a vocabulary. murmur_glassine-drift.zip. CymaFrame_palimpsestual-eddy.png. That kind of thing.
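Vocabulary filenames need only two word lists and a random source. Here is a sketch, assuming the adjective-noun pattern implied by the two examples. Seeding a small PRNG (mulberry32, a widely used 32-bit generator) once per session makes the names reproducible from that seed. Only glassine, palimpsestual, drift, and eddy come from the examples above. The other words, the seed, and `vocabularyName` are hypothetical.

```typescript
// Hypothetical vocabulary-based filenames (adjective-noun), seeded per session.
const ADJECTIVES = ["glassine", "palimpsestual", "annealed", "hollow", "ferric"];
const NOUNS = ["drift", "eddy", "node", "lattice", "tremor"];

// mulberry32: a small, fast 32-bit PRNG; returns floats in [0, 1).
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return (): number => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

function vocabularyName(prefix: string, ext: string, rand: () => number): string {
  const pick = (words: string[]): string => words[Math.floor(rand() * words.length)];
  return `${prefix}_${pick(ADJECTIVES)}-${pick(NOUNS)}.${ext}`;
}

// Usage: one seed per session keeps names reproducible.
const sessionRand = mulberry32(20240601);
const zipName = vocabularyName("murmur", "zip", sessionRand);
const frameName = vocabularyName("CymaFrame", "png", sessionRand);
```

Five words per list give only 25 combinations. A real implementation would need a much larger vocabulary, or a collision check, before these names could stand in for timestamps.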

murmur exists in two forms — the same instrument, twice — and you should not feel a difference between them when you are playing.

The web version runs in your browser. No install. No account. Audio and camera data never leave your machine — the synthesis is Web Audio, the shaders are WebGL, the hand tracking is MediaPipe Hands loaded from a CDN. Total payload is smaller than most websites' analytics scripts. Click a link. Make a sound.

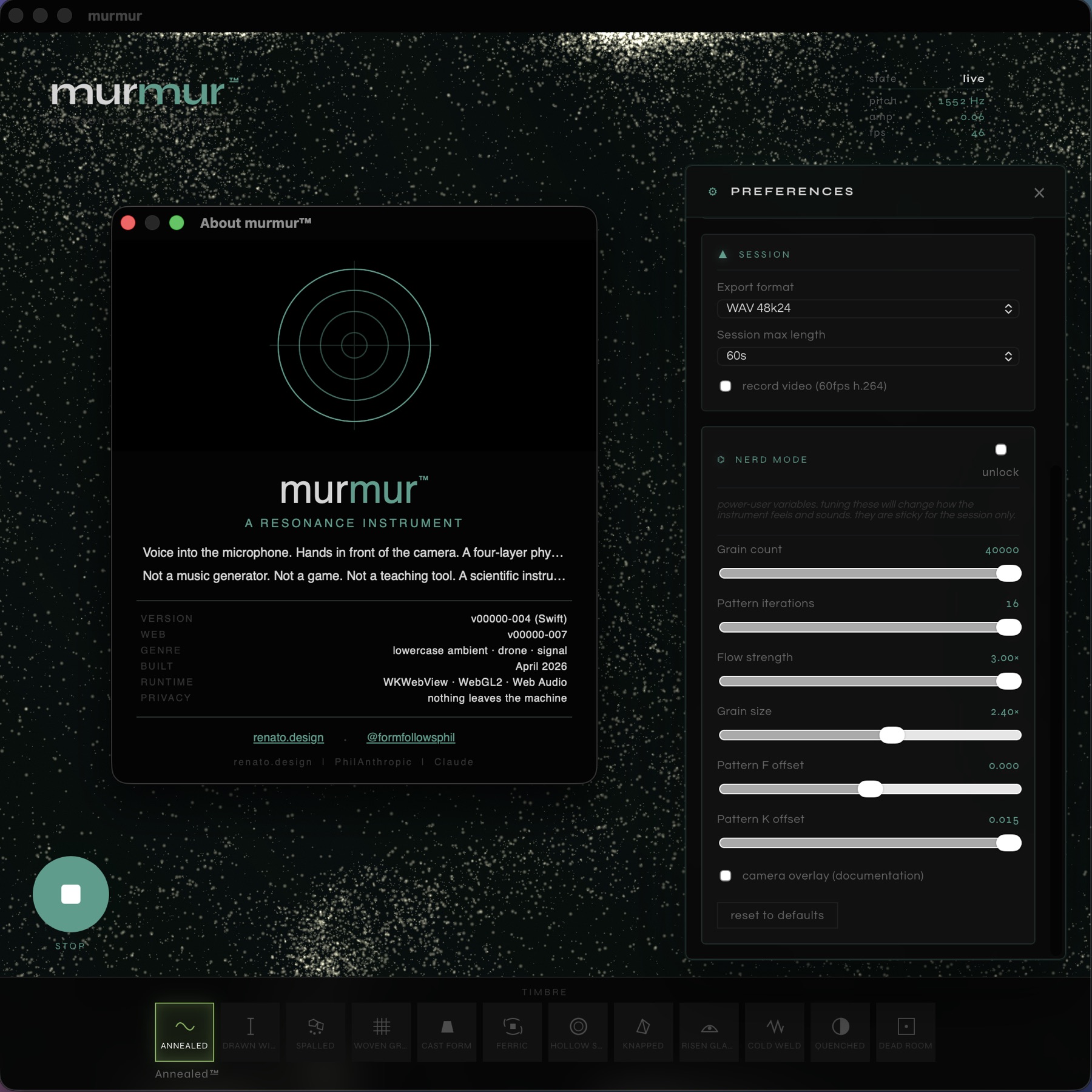

The Mac version is the web version inside a native window. Same field, same shaders, same colors, same physics. What it adds: a real macOS menu bar with proper keyboard shortcuts, native About / Preferences / Concordance / Help windows, a dock icon, traffic lights, a draggable title bar, and the kind of small comforts that justify an .app instead of a bookmark.

murmur is a mirror. You are what it shows you. Sometimes that is beautiful, sometimes it is interestingly chaotic, and sometimes it is just sand on a plate that has stopped vibrating.

The CymaField does not have a preference.

The Mac version is signed with the renato.design Developer ID, notarized by Apple, and stapled. Both the .app and the .dmg pass Gatekeeper as "Notarized Developer ID." On first launch you should see exactly one prompt — "Murmur is from Phillip Renato. Open?" — and then it runs. No "unidentified developer." No System Settings detour.

The web version runs entirely in your browser. The microphone and camera are accessed through standard browser APIs, and their data never leaves your machine. There is no analytics script. There is no backend server.