About thirty applications for 3D modeling, simulation, rendering, writing, drawing, critique, soundscapes, and design intelligence — built entirely through conversation between me and an AI. I didn't write a single line of code. I wrote every line of code into existence.

Iterative LLM Co-Authorship (ILCA): a sustained dialogue where I set the goals, direct conceptual approaches, guide the aesthetics, and demand certain kinds of interaction while Claude writes the code. It's not vibecoding in the way most people mean it, although that's a vibey definition. This has been a months-long discourse, arguing through my experience as a designer, leveraging my frustration as a user of sometimes poorly engineered tools, and taking advantage of a skilled model's architectural syntax and massively parallel debugging power. It writes, I poise my fingers over the edit buttons.

Science describes reality. Engineering gives us levers. I put mine under an edge, find something to use as a fulcrum, step back and push down until something interesting happens.

In February 2026 I began building apps, started a shared code library, began work on a design standards document, started a signing and distribution pipeline, and made a synthetic version of myself to keep track of the projects and the documentation. I wrote little of it myself, but I caused it all to be written.

I directed the creation and refinement of every pixel and every vector line, evaluated every mesh, and pumped every build through a sustained dialogue with a computer that calls itself Claude. This method, which we call Iterative LLM Co-Authorship (ILCA), is nothing like autocomplete. I tell it what I want and how I want it; it tries; I tell it what it did wrong and draw on a screenshot; it tries again; where words don't work, I draw something in Illustrator, or screen-record myself in Rhino or in the 3D tool we are building, to show it what I mean; we get closer…

The result is an ecosystem that may be without precedent for a solo practitioner: a growing cloud of applications, almost half a million lines of code written and in use. Each project is assigned a music genre that suggests its aesthetic personality, which helps “us” decide how each app should diverge from the others, even though only one of these apps makes music-like sound.

ILCA is transparent about its realities. Failure rates hover around 40% per session, counting both functional and aesthetic misalignment. Claude cannot see rendered output, so I describe what needs to change; it tries something it was sure would work, and it doesn't; I give it another metaphor, or suggest a technical route that is only occasionally even close; it hallucinates API calls. I will tell it, “that reminds me of something I remember reading about how Lorenz discovered his first strange attractor,” and three interactions later, “that sucks, do it again.” We push and pull and fray and talk shit to each other, furiously, until we get to something we can live with.

The momentum was disorienting. Claude wrote almost 500,000 lines across almost 1,400 files in our first six weeks. I would get up to go to the bathroom at 2am, check its progress, stay up to tell it to fix something, and wind up working until I had to go to work. We both break things, but it breaks many more things, much faster. Fortunately, it builds just as fast.

Our relationship is closer to a fussy designer directing the most skilled fabricator on Earth than a programmer using an autocomplete to debug a script. The fabricator has expertise I lack and a speed that everyone lacks. I have a vision that the fabricator cannot generate. Or maybe it just would not.

Spontagenesis: finding form through directed accident. No app was fully specified before development began. Each one starts with a sticky note, three paragraphs long at most, and then conversation becomes the design method. I have it check project ideas, and even names, against what other people are doing, to help ensure we are making interesting and maybe new things. The software emerges from a design discourse between one man and all men, as invested in the LLMery of Claude.

DesignDoing: a fused state where deliberation and execution are as close to a single gesture as possible. Think-do-think-do-ThinkDo. When I am 3D modeling something in my studio, I don't really plan a render in the technical sense: I import a model, drop a material on it, point my camera, set my lights, and see what happens, and then I make an adjustment and try again. Rendering is an art that happens to leverage technologies, which sometimes happen to be built on science. It's not that different here, except my renderer is an LLM, and I am not making grids of pixels but tools that use pixels as part of a toolset. To be fair, I have far less direct control here than I have over my modeler or rendering software, and I am dealing with a radically more powerful, dangerous, and fraught “agent.”

Professional creative tools are environments built over years by teams of programmers and mathematician-artists. This is just me and my li'l ol' supercomputers. Shucks. I mean, I haven't “shipped” a lot yet, or even fully battle-hardened any of it. But I have learned something so far that I hoped would be true: getting rid of the friction means I can almost competently learn about, and practice, many more fields than my non-AI self ever would or probably could. Maybe that's the Dunning-Kruger in me.

These apps, this cluster of components, are an argument about software design made by a human using software. What happens when a curious and determined designer with no programming ability, but with some domain expertise, strong aesthetic convictions, and a willingness not to sleep, directs an AI to build an entire creative practice in code? About thirty applications so far, half a million lines, and an ecosystem that maintains, critiques, and extends itself.

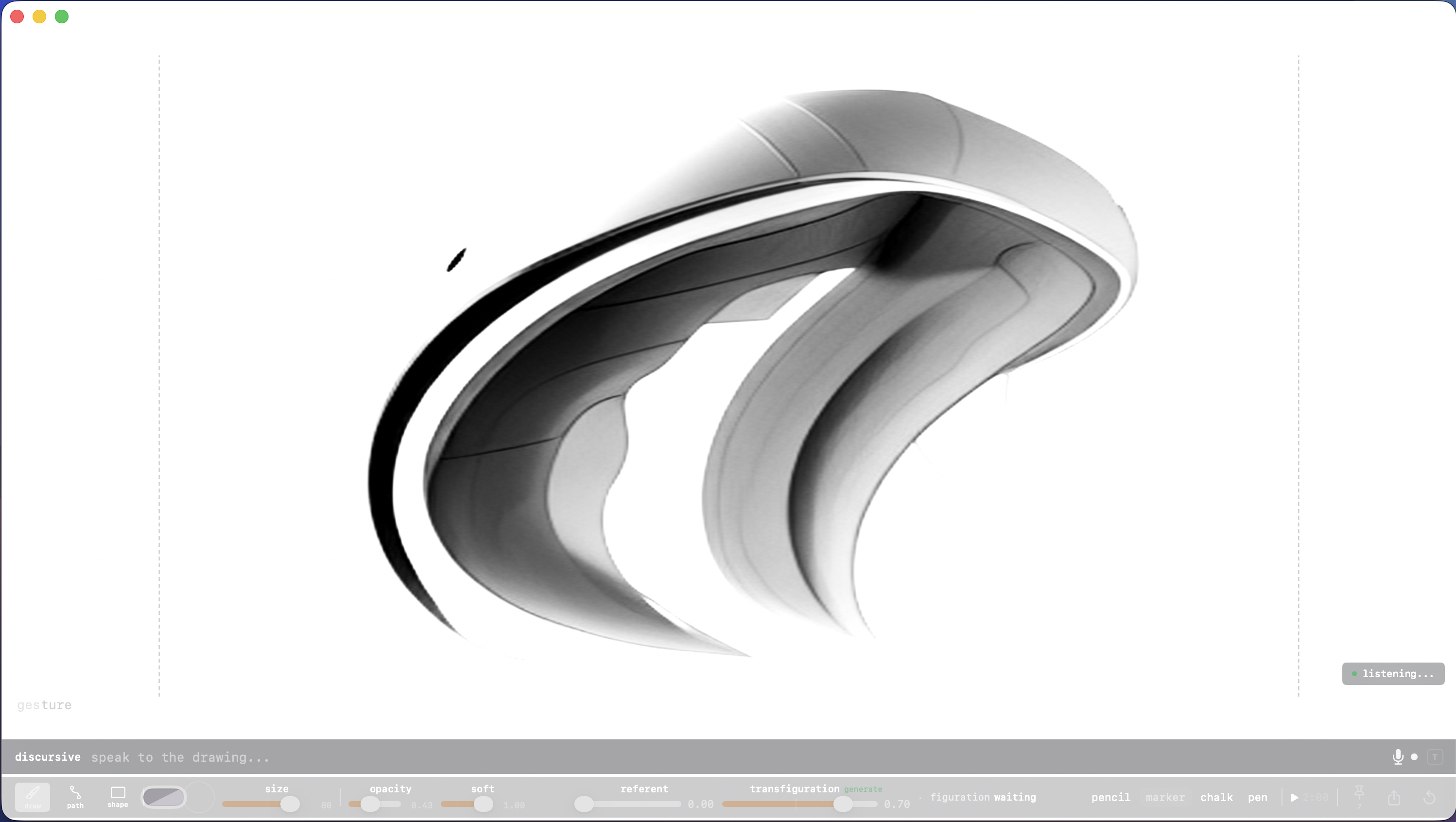

Draw-with-image, not prompt-to-image. A blank rectangle that waits for your first mark to dirty it up. 350-term design vocabulary, pressure-sensitive canvas, voice input. Every stroke is a commitment and every inference is a provocation.

Gesture is a blank rectangle that waits for your first mark to dirty it up. It refines that mark; you move a slider and your mark gets darker or disappears; you make another mark and the model refines or extends it. Activate text or voice input and your verbal prompts subtly adjust the drawing, if the transfiguration slider is high enough. Make it quicker, make it hotter, it's too damned sharp and it's about to cut me. Help!

My largest app by line count, which means little to me other than the tokens I burned to make it. macOS native, and it works with pressure-sensitive tablets. It only has a few brushes; it's not meant to compete with the big kids (yet). But it can draw vector splines and edit them with Bezier handles if you want precision. It uses Core ML Stable Diffusion and was trained with about eleven vernacular packs (you can extend it by feeding it your own drawings). You can capture images and export them all at once. And like all my apps, Gesture has a rich Concordance panel in addition to full help and tooltips.

You draw. Your voice adds design vocabulary while your hands stay on the surface. The transfiguration slider controls how much the model transforms your marks — from a whisper of refinement to aggressive reimagining, capped at 0.85 because your hand should always be visible in the result. Pin what you like. Export the batch. Repeat until the drawing is smarter than you expected.
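One way to picture that 0.85 cap, as a minimal sketch: assume (this is not stated above) that the transfiguration slider feeds the strength parameter of a standard image-to-image diffusion pass, where strength decides what fraction of the denoising schedule gets rerun over your drawing. The function names are hypothetical.

```python
MAX_TRANSFIGURATION = 0.85  # cap from the text: your hand should stay visible


def transfiguration_to_strength(slider: float) -> float:
    """Clamp a raw slider value into [0, 0.85] (hypothetical mapping)."""
    return min(max(slider, 0.0), MAX_TRANSFIGURATION)


def denoising_steps(strength: float, num_inference_steps: int = 50) -> int:
    """How many steps an img2img pass reruns at this strength.

    Common img2img convention: the drawing is noised part-way into the
    schedule and only the last `strength` fraction of steps is denoised.
    The skipped, noisiest steps are roughly where overall composition is
    decided, so that part is inherited from the drawing.
    """
    return min(int(num_inference_steps * strength), num_inference_steps)


if __name__ == "__main__":
    for raw in (0.2, 0.6, 1.0):
        strength = transfiguration_to_strength(raw)
        steps = denoising_steps(strength)
        print(f"slider {raw:.1f} -> strength {strength:.2f}, {steps} of 50 steps rerun")
```

Under those assumptions, a 50-step schedule at the cap reruns 42 steps and never touches the 8 noisiest ones.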

Three input lanes. A figuration prompt overlay for editing the prompt directly on the canvas. A DisCursive bar with 350+ design vocabulary terms across twelve domains, including elements, principles, edge character, composition, rendering, vernacular, and affect. Voice input with on-device speech recognition, continuous cycling, and a 1.5-second silence auto-commit. Your hands never leave the surface.
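The 1.5-second silence auto-commit can be sketched as a small buffer that a speech recognizer's partial results feed into. This is a hypothetical design, not the app's code: it assumes the recognizer reports its whole running transcript on each update and that something calls `tick` on a timer. `SilenceCommitter` and its methods are illustrative names.

```python
from dataclasses import dataclass, field
from typing import List, Optional

SILENCE_SECONDS = 1.5  # auto-commit threshold from the text


@dataclass
class SilenceCommitter:
    """Hypothetical buffer that commits a spoken phrase after enough silence."""

    silence: float = SILENCE_SECONDS
    pending: str = ""
    last_heard: Optional[float] = None
    committed: List[str] = field(default_factory=list)

    def hear(self, transcript: str, now: float) -> None:
        """Store the recognizer's latest running transcript and when it arrived."""
        self.pending = transcript
        self.last_heard = now

    def tick(self, now: float) -> Optional[str]:
        """Call on a timer; commits and returns the phrase once silence is long enough."""
        if not self.pending or self.last_heard is None:
            return None
        if now - self.last_heard < self.silence:
            return None
        phrase = self.pending.strip()
        self.committed.append(phrase)
        # Reset so the next utterance starts a fresh phrase.
        self.pending = ""
        self.last_heard = None
        return phrase


if __name__ == "__main__":
    c = SilenceCommitter()
    c.hear("knife edge", 0.0)
    c.hear("knife edge, cross section", 1.0)
    print(c.tick(2.0), c.tick(2.5))
```

Passing `now` in explicitly, instead of reading a clock inside the class, keeps the timing logic testable; in an app, the timer would supply the current time.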

The vocabulary engine. Not a chatbot. A design vocabulary. Say "aggressive contour, cross section, knife edge" and it knows exactly what that means without asking a cloud. 350 terms resolve instantly. Unknown phrases route through a local LLM for rewriting. Everything runs on your machine.
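The lookup-then-fallback order described here might look something like this minimal sketch. The three entries and their prompt text are invented placeholders (the real 350-term vocabulary isn't reproduced here), and `rewrite_unknown` stands in for whatever local LLM call does the rewriting.

```python
from typing import Callable, Dict, List, Tuple

# Invented placeholder entries; the real 350-term vocabulary is not shown here.
VOCABULARY: Dict[str, str] = {
    "aggressive contour": "bold, high-tension outline",
    "cross section": "sectional cut revealing the interior profile",
    "knife edge": "razor-thin, crisp edge",
}


def normalize(phrase: str) -> str:
    """Lowercase and collapse whitespace so 'Knife  Edge' matches 'knife edge'."""
    return " ".join(phrase.lower().split())


def resolve(utterance: str, rewrite_unknown: Callable[[str], str]) -> Tuple[List[str], List[str]]:
    """Resolve comma-separated phrases locally; route unknown ones to a rewriter.

    Returns (prompt_fragments, unknown_phrases).
    """
    fragments: List[str] = []
    unknown: List[str] = []
    for raw in utterance.split(","):
        phrase = normalize(raw)
        if not phrase:
            continue
        if phrase in VOCABULARY:
            fragments.append(VOCABULARY[phrase])  # instant dictionary hit
        else:
            unknown.append(phrase)
            fragments.append(rewrite_unknown(phrase))  # stand-in for a local LLM call
    return fragments, unknown


if __name__ == "__main__":
    def fake_llm(p: str) -> str:
        return f"<rewritten: {p}>"

    print(resolve("aggressive contour, cross section, knife edge", fake_llm))
    print(resolve("Knife Edge, swoopy", fake_llm))
```

The dictionary hit is a constant-time lookup with no model involved, which is why known terms can resolve instantly; only the leftovers pay for an LLM call.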

Brushes and splines. Pencil, marker, chalk, pen, blueline annotation (visible to you, invisible to the model), eraser. Pressure-sensitive. Catmull-Rom interpolation. Fifty levels of undo. Spline editing with anchor points and Bezier handles for when the gesture needs precision.
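For the curious, here is roughly what Catmull-Rom interpolation and fifty levels of undo amount to, as a sketch rather than the app's implementation. It assumes uniform Catmull-Rom, duplicated end points so the curve reaches the first and last samples, and pressure carried as a third coordinate; `smooth_stroke` and `UndoStack` are hypothetical names.

```python
from collections import deque
from typing import Deque, List, Sequence, Tuple

# (x, y, pressure); carrying pressure as a third coordinate is an assumption.
Point = Tuple[float, float, float]


def catmull_rom(p0: Point, p1: Point, p2: Point, p3: Point, t: float) -> Point:
    """Uniform Catmull-Rom: a point on the segment from p1 (t=0) to p2 (t=1)."""
    t2, t3 = t * t, t * t * t
    x, y, p = (
        0.5 * (2 * b + (c - a) * t + (2 * a - 5 * b + 4 * c - d) * t2 + (3 * b - a - 3 * c + d) * t3)
        for a, b, c, d in zip(p0, p1, p2, p3)
    )
    return (x, y, p)


def smooth_stroke(points: Sequence[Point], samples: int = 8) -> List[Point]:
    """Densify raw tablet samples into a curve that passes through every one."""
    if len(points) < 2:
        return list(points)
    padded = [points[0], *points, points[-1]]  # duplicate ends so the curve reaches them
    out: List[Point] = []
    for i in range(1, len(padded) - 2):
        for s in range(samples):
            out.append(catmull_rom(padded[i - 1], padded[i], padded[i + 1], padded[i + 2], s / samples))
    out.append(points[-1])
    return out


class UndoStack:
    """Bounded history: once `limit` states are stored, the oldest is dropped."""

    def __init__(self, limit: int = 50) -> None:
        self._states: Deque[object] = deque(maxlen=limit)

    def push(self, state: object) -> None:
        """Snapshot the canvas state before an edit."""
        self._states.append(state)

    def undo(self) -> object:
        """Return the most recent snapshot, or None when history is empty."""
        return self._states.pop() if self._states else None

    def __len__(self) -> int:
        return len(self._states)
```

Catmull-Rom passes through every sampled point, which suits freehand strokes; the trade-off is that it can overshoot slightly around sharp turns, so clamping the interpolated pressure to 0..1 may be worth doing.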

Modes for when you mean it. IcarusMode pushes divergence to the ceiling. StrangerMode starts cold with no conditioning. HeartattaCk sets every parameter to maximum. DayspringMode is a Konami Code easter egg. You'll know when you find it.

Behavioral word processor. Watches how you write, not what. Chromatic affect, register intelligence, a seminar of 24 literary voices.

Parametric shoe last design. The biomechanical argument about how a foot should behave inside a shoe.

Parametric automotive form explorer. The moment before commitment.

Parametric jewelry and ring design with embedded AI parameterization.

Expressive NURBS surface modeler. Deep, but hides its complexity.

3D mesh collage and metamashup. Nothing original, everything transformed.

Blobject creation engine. Looks like a toy. Is a toy. That is entirely the point.

Pixel-to-sculpture. Paint a 32x32 icon, extrude by value, smooth, paint with AI.
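The first three steps of that pipeline (paint, extrude by value, smooth) are simple enough to sketch. This assumes an 8-bit grayscale icon where brighter pixels rise higher and a plain 3×3 box blur for smoothing; the real app's mapping and smoothing may well differ, the function names are hypothetical, and the AI painting step is left out.

```python
from typing import List, Tuple

Grid = List[List[float]]


def extrude_by_value(pixels: List[List[int]], max_height: float = 8.0) -> Grid:
    """Map 8-bit grayscale values to heights: 0 stays flat, 255 rises to max_height."""
    return [[v / 255.0 * max_height for v in row] for row in pixels]


def smooth(heights: Grid, passes: int = 1) -> Grid:
    """3x3 box blur; at the borders, average only the neighbors that exist."""
    h, w = len(heights), len(heights[0])
    for _ in range(passes):
        src = heights
        heights = [[0.0] * w for _ in range(h)]
        for y in range(h):
            for x in range(w):
                total, count = 0.0, 0
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        yy, xx = y + dy, x + dx
                        if 0 <= yy < h and 0 <= xx < w:
                            total += src[yy][xx]
                            count += 1
                heights[y][x] = total / count
    return heights


def to_vertices(heights: Grid, cell: float = 1.0) -> List[Tuple[float, float, float]]:
    """One vertex per pixel center: (x, y, z), with z taken from the height field."""
    return [((x + 0.5) * cell, (y + 0.5) * cell, z)
            for y, row in enumerate(heights) for x, z in enumerate(row)]


if __name__ == "__main__":
    icon = [[0] * 32 for _ in range(32)]
    icon[16][16] = 255  # a single bright pixel
    relief = smooth(extrude_by_value(icon), passes=2)
    print(len(to_vertices(relief)), max(max(row) for row in relief))
```

Each blur pass spreads a lone bright pixel's height across its neighbors, which is what turns a stair-stepped extrusion into something closer to a sculpted relief.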

UltraParadoxical Visualization Engine. Both powerful and simple. Whether it succeeds is the kind of question it is designed to provoke.

Computational fluid design. Liquid as a design material. Spigot on, fluid flowing.

Fabric simulation and material science. Textile analysis in powers of ten.

Native macOS renderer wrapping headless Blender. Structure enables surprise.

18-voice critical response instrument. Eighteen ways of looking at it.

Direct-manipulation sculpting for Rhino. The brush stroke as an echo of intent.

TPMS and lattice engine for Grasshopper. The math describes something that was already there.

Parametric tree support generator for Rhino. The forest abides.

Parametric gill louver generator for Rhino. Coral reef anatomy as ventilation logic.

Physical lab for Rhino. Describe a material, place a sprue, watch the cavity fill and the porosity form.

Wave interference surface sculptor. Digital spall: the artifacts of simulated force.

Text-to-3D inscription and excavation. Words given the weight of physical force.

Parametric tread and outsole design. The part of the shoe you destroy by walking in it.

Architectural inquiry tool. Small ensembles, precise voicing, every part audible.

Satirical pitch deck generator. The boundary between sincere specification and parody.

Application generator. Conceptual ambition that borders on hubris.

UI archaeology playground. 1200+ interface artifacts, compulsively hoarded.

A hybrid graphic design engine. The back of the page, where pixels and curves and fat little bodies are the same animal seen from different sides.

Multi-modal sensory 3D generation. Closer to playing an instrument than operating a tool.

Data intelligence surface. Hilariously unfinished. Feed it a file and watch it go to work.

Personal knowledge graph. Observatory, voice, archive — three apps merged into the thing that watches everything and talks back.